116,000 Stars in 8 Weeks: Four Teams Rewrote OpenClaw — Here's What the Code Says

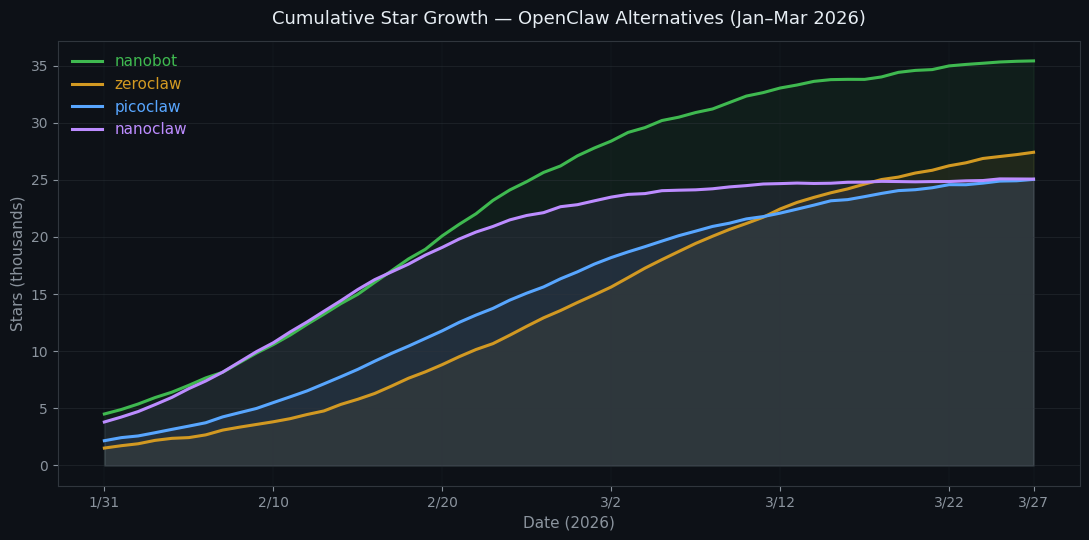

Four teams rewrote OpenClaw from scratch in Python, Rust, Go, and TypeScript — accumulating 116K stars in 8 weeks. We read the code and analyzed the fork ratios. Here's what they reveal about who's building a product vs. a platform.

Four teams. Four languages. Eight weeks. 116,000 stars. Each one a from-scratch rewrite of OpenClaw — the 336K-star personal AI assistant that went from zero to GitHub history in four months.

Not forks. Fresh implementations, each with a different bet on what OpenClaw got wrong.

TL;DR: OpenClaw (336K ⭐, TypeScript) spawned 4 independent rewrites in 8 weeks: nanobot (36K ⭐, Python — "auditable in an afternoon"), ZeroClaw (29K ⭐, Rust — "runs on $10 hardware"), PicoClaw (26K ⭐, Go — "one binary, any architecture"), and NanoClaw (25K ⭐, TypeScript — "fork it, ship your own"). The fork ratios reveal who's building a product vs. who's building a platform. Here's our analysis of the top OpenClaw alternatives in 2026.

Disclosure: OSSInsight is built by PingCAP. We have no affiliation with any of the projects analyzed in this post. All data is from public GitHub APIs.

| Repository | Language | Stars | Created | Contributors |
|---|---|---|---|---|
| HKUDS/nanobot | Python | 36,283 | Feb 1, 2026 | ~204 |
| zeroclaw-labs/zeroclaw | Rust | 28,790 | Feb 13, 2026 | ~215 |
| sipeed/picoclaw | Go | 26,142 | Feb 4, 2026 | ~187 |
| qwibitai/nanoclaw | TypeScript | 25,489 | Jan 31, 2026 | ~62 |

Four different languages. Four different origin stories. Four different answers to the same question: What did OpenClaw get wrong? (Data as of March 26, 2026.)

We read the code. Here's what they each believe. (Compare them head-to-head on OSSInsight →)

Why Now? The Context First.

OpenClaw's README calls it "Your own personal AI assistant. Any OS. Any Platform." The tagline is accurate — and that's the problem.

At ~335 MB repo size, running on Node.js with a runtime footprint that can exceed 1 GB, OpenClaw is designed to run on a Mac Mini or a cloud VM. It has 15,600+ open issues. It's a large piece of software solving a large problem.

But a personal AI assistant doesn't need to solve a large problem. For a lot of people, it needs to:

- Run on cheap hardware

- Start instantly

- Be understandable enough to modify

OpenClaw does all three of these things poorly, by design. Its size is the cost of its strengths: 800+ skills, 50+ channel integrations, battle-tested memory management, and an ecosystem that none of these alternatives has replicated. The rewrites emerged to address specific gaps, but they disagree on which gap matters most.

Nanobot: The "Provable Simplicity" Bet

HKUDS/nanobot comes from the Data Intelligence Lab at the University of Hong Kong (HKU). Its headline claim:

⚡️ Delivers core agent functionality with 99% fewer lines of code than OpenClaw.

They mean it. The repo includes a shell script — core_agent_lines.sh — that you can run yourself to verify the count. When we ran it:

nanobot core agent line count

================================

agent/ 1,664 lines

agent/tools/ 1,899 lines

bus/ 88 lines

config/ 433 lines

cron/ 484 lines

heartbeat/ 192 lines

session/ 273 lines

utils/ 399 lines

(root) 14 lines

Core total: 5,551 lines

5,551 lines for the core agent. That's actually auditable by a single developer in an afternoon.

A caveat: the "99%" claim counts only the core agent. Channels, CLI, providers, and skills add another 25,000+ lines, putting the full codebase closer to 30K lines — still ~89% smaller than OpenClaw, but not 99%. The agent brain — the part that thinks, schedules, remembers, and decides — is genuinely 5,551 lines of Python.

This design philosophy has a name: radically transparent defaults. If something goes wrong with nanobot, you can actually read the code that caused it. With OpenClaw's 270,000+ lines across the equivalent scope, that's rarely true.
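The size comparisons above are simple ratios, and a short sketch makes them easy to re-check. It assumes the "270,000+" figure quoted for OpenClaw as the baseline and rounds nanobot's full codebase to 30,000 lines; the function and constant names are ours, not taken from any of the repositories.

```rust
// Line counts quoted in the text. OPENCLAW_LINES is the "270,000+" lower
// bound, used here as an assumed baseline.
const OPENCLAW_LINES: f64 = 270_000.0;
const NANOBOT_CORE_LINES: f64 = 5_551.0;
const NANOBOT_FULL_LINES: f64 = 30_000.0; // "closer to 30K", rounded

/// Percentage by which `smaller` undercuts `baseline`.
fn percent_smaller(smaller: f64, baseline: f64) -> f64 {
    (1.0 - smaller / baseline) * 100.0
}

fn main() {
    let core = percent_smaller(NANOBOT_CORE_LINES, OPENCLAW_LINES);
    let full = percent_smaller(NANOBOT_FULL_LINES, OPENCLAW_LINES);

    println!("core agent:    {:.1}% fewer lines", core);
    println!("full codebase: {:.1}% fewer lines", full);
}
```

Against this baseline the full codebase comes out about 89% smaller, matching the figure above, and the core alone about 98%. Reaching 99% with a 5,551-line core would take a baseline of roughly 555,000 lines, so the headline number depends on how OpenClaw's lines are counted.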

Nanobot's adoption curve reflects this: 36,283 stars with a fork ratio of 0.171, close to OpenClaw's 0.196. People aren't just starring it; they're building on it. The ~204 contributors (despite Python's lower "coolness factor" compared with Rust) tell you the community is engaged, not just interested.

The recent supply chain incident (the team removed the litellm dependency after a poisoning advisory) shows the tradeoff goes both ways: having fewer dependencies doesn't mean zero risk, because the ones you keep still need vetting.

ZeroClaw: The "Real Hardware" Bet

zeroclaw-labs/zeroclaw makes a different bet entirely. Their claim:

⚡️ Runs on $10 hardware with <5MB RAM: That's 99% less memory than OpenClaw.

The team — "students and members of the Harvard, MIT, and Sundai.Club communities" — wrote the whole thing in Rust. At 270,000+ lines across 367 Rust files, ZeroClaw is not small. But it's small at runtime.

The benchmark table in their README is the kind of thing that gets shared:

| Metric | OpenClaw | Nanobot | ZeroClaw |
|---|---|---|---|
| RAM | >1 GB | >100 MB | <5 MB |
| Startup (0.8GHz) | >500s | >30s | <10ms |
| Binary Size | ~28 MB dist | N/A | ~8.8 MB |
| Target Hardware | Mac Mini $599 | Linux SBC ~$50 | Any board $10 |

(From ZeroClaw's README, measurements on macOS arm64. These are self-reported benchmarks and haven't been independently verified — the >500s startup claim for OpenClaw in particular seems extreme and may reflect a cold-start edge case rather than typical usage.)

What surprised us was the /firmware directory — ZeroClaw ships actual firmware for Arduino, ESP32, Nucleo, and Pico boards. This is not a web app with embedded ambitions. This is an embedded project that also runs on your laptop.

The memory architecture is particularly thoughtful. ZeroClaw implements time-decay scoring for non-core memories, with a 7-day half-life:

// From src/memory/decay.rs

// Decay formula: score * 2^(-age_days / half_life_days)

// Core memories are evergreen — never decay

if entry.category == MemoryCategory::Core {

continue;

}

let decay_factor = (-age_days / half_life * std::f64::consts::LN_2).exp();

entry.score = Some(score * decay_factor);

They also built a knowledge graph on top of SQLite with typed relationship edges (Uses, Replaces, Extends, AuthoredBy, AppliesTo), which is genuinely novel for an embedded assistant.
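To see what a 7-day half-life does in practice, here is a self-contained sketch of the decay formula from the excerpt. The `MemoryEntry` struct, its fields, and the `apply_decay` function are hypothetical stand-ins written for this example, not ZeroClaw's actual types; only the formula and the "core memories never decay" rule come from the quoted code.

```rust
/// Hypothetical stand-ins for the types referenced in the excerpt.
#[derive(Debug, PartialEq)]
enum MemoryCategory {
    Core,
    Other,
}

struct MemoryEntry {
    category: MemoryCategory,
    score: Option<f64>,
    age_days: f64,
}

/// 2^(-age_days / half_life_days), computed as exp(-age / half_life * ln 2).
fn decay_factor(age_days: f64, half_life_days: f64) -> f64 {
    (-age_days / half_life_days * std::f64::consts::LN_2).exp()
}

/// Scales every non-core score by its decay factor; core entries are skipped.
fn apply_decay(entries: &mut [MemoryEntry], half_life_days: f64) {
    for entry in entries.iter_mut() {
        if entry.category == MemoryCategory::Core {
            continue; // evergreen: never decays
        }
        if let Some(score) = entry.score {
            entry.score = Some(score * decay_factor(entry.age_days, half_life_days));
        }
    }
}

fn main() {
    let mut entries = vec![
        MemoryEntry { category: MemoryCategory::Core, score: Some(1.0), age_days: 30.0 },
        MemoryEntry { category: MemoryCategory::Other, score: Some(1.0), age_days: 7.0 },
        MemoryEntry { category: MemoryCategory::Other, score: Some(1.0), age_days: 14.0 },
    ];
    apply_decay(&mut entries, 7.0);
    for e in &entries {
        println!("{:?} age={}d score={:?}", e.category, e.age_days, e.score);
    }
}
```

With a 7-day half-life, a non-core memory keeps about half its score after one week and about a quarter after two, while the 30-day-old core entry is left untouched.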

The fork ratio of 0.138 is the lowest in the group. People are running ZeroClaw, not forking it. That makes sense: if you're deploying to an ESP32, you're not customizing the Rust. You're using the binary.

PicoClaw: The "Go Everywhere" Bet

sipeed/picoclaw comes from Sipeed — the Chinese maker hardware company known for affordable RISC-V boards. Where ZeroClaw targets Raspberry Pi and ESP32, PicoClaw targets Sipeed's own ecosystem: K210 chips, Tang Nano FPGAs, MAIX boards.

The bet is on Go's cross-compilation story. At 89% Go code and 26 MB repo size, PicoClaw produces a single native binary for any architecture Go supports: simpler than ZeroClaw's Rust (no borrow checker), more portable than nanobot's Python (no runtime dependency). With 187 contributors in ~50 days, nightly releases, and one of the lowest issue ratios in the group (0.013, under a third of OpenClaw's 0.047), PicoClaw is shipping fast and breaking little.

NanoClaw: The "Platform" Bet

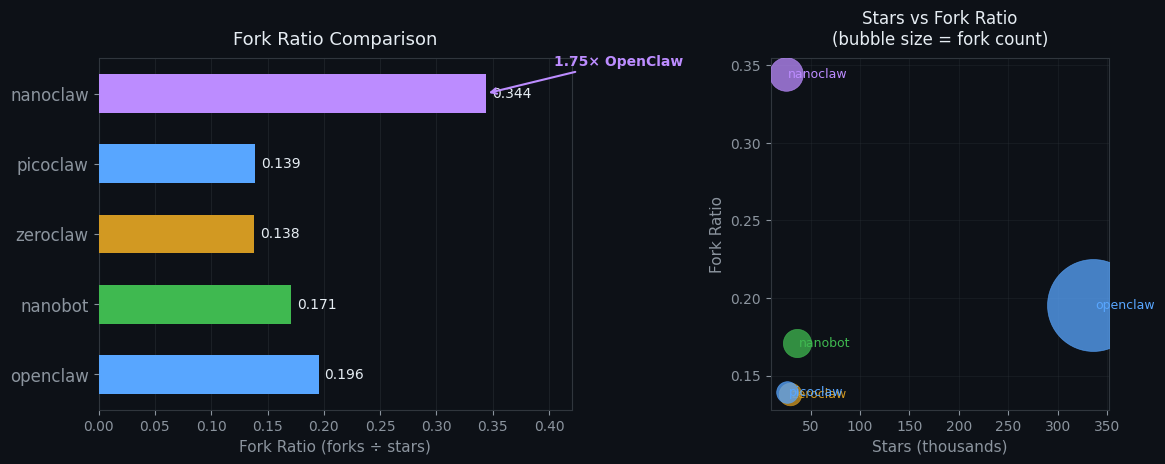

qwibitai/nanoclaw is the outlier — and it's where the most interesting signal lives.

Its description: "A lightweight alternative to OpenClaw that runs in containers for security. Connects to WhatsApp, Telegram..."

Container-first. TypeScript. 93% TS code. Built by a ~62-person contributor team (the smallest in the group). But look at this number:

Fork ratio: 0.344

NanoClaw has 8,778 forks against 25,489 stars. Compare this to the others:

| Project | Stars | Forks | Fork Ratio |
|---|---|---|---|
| openclaw/openclaw | 336,105 | 65,718 | 0.196 |
| HKUDS/nanobot | 36,283 | 6,210 | 0.171 |
| sipeed/picoclaw | 26,142 | 3,642 | 0.139 |
| zeroclaw-labs/zeroclaw | 28,790 | 3,984 | 0.138 |
| qwibitai/nanoclaw | 25,489 | 8,778 | 0.344 |

NanoClaw's fork ratio is 1.76x OpenClaw's and 2.5x ZeroClaw's.
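The fork ratio is just forks divided by stars, so the table can be reproduced from its own star and fork columns. The sketch below does that and prints how NanoClaw compares with each project; the `REPOS` constant and `fork_ratio` helper are names chosen for this example.

```rust
/// (repository, stars, forks) as listed in the table above.
const REPOS: [(&str, u32, u32); 5] = [
    ("openclaw/openclaw", 336_105, 65_718),
    ("HKUDS/nanobot", 36_283, 6_210),
    ("sipeed/picoclaw", 26_142, 3_642),
    ("zeroclaw-labs/zeroclaw", 28_790, 3_984),
    ("qwibitai/nanoclaw", 25_489, 8_778),
];

/// Forks per star.
fn fork_ratio(stars: u32, forks: u32) -> f64 {
    f64::from(forks) / f64::from(stars)
}

fn main() {
    // NanoClaw is the last row; use it as the reference point.
    let (_, nc_stars, nc_forks) = REPOS[4];
    let nanoclaw = fork_ratio(nc_stars, nc_forks);

    for &(name, stars, forks) in REPOS.iter() {
        let ratio = fork_ratio(stars, forks);
        println!(
            "{:<24} ratio {:.3}  (nanoclaw is {:.2}x)",
            name,
            ratio,
            nanoclaw / ratio
        );
    }
}
```

Computing the multiples from unrounded ratios gives roughly 1.76x for OpenClaw and just under 2.5x for ZeroClaw.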

That's not people using nanoclaw. That's people using nanoclaw as a base to build their own thing.

The high fork ratio with low contributor count (~62 vs 200+ for the others) is a specific pattern: architectural simplicity that invites extension. When a codebase is simple enough to fork and modify but not well-supported enough to contribute back to, the forks proliferate.

A fair counter-argument: high fork ratios in young repos can also reflect tutorial-style forking or early-adopter behavior rather than genuine customization. We'd need to revisit this metric in 6 months to confirm the pattern holds. But the combination of high forks and low contributors is harder to explain away — if people were just forking to learn, you'd expect more of them to contribute back.

NanoClaw at 11 MB (the smallest of the group) and TypeScript-native is the entry point for developers who want to quickly build a specialized assistant without understanding Rust or caring about 5MB memory footprints. The container-first approach means it runs cleanly in CI, staging environments, and cloud functions.

We'd bet the majority of NanoClaw's 8,778 forks are customized personal assistants that will never be merged back. That's not a failure mode — it's the intended design.

The Pattern: Four Theories of What "Lightweight" Means

These four projects each encode a different theory of what was wrong with OpenClaw:

Nanobot's theory: OpenClaw is too big to understand. Fix: Prove simplicity by counting lines.

ZeroClaw's theory: OpenClaw requires too much hardware. Fix: Run it in 5 MB on a $10 board.

PicoClaw's theory: OpenClaw is too platform-specific. Fix: Compile to any architecture in a single Go binary.

NanoClaw's theory: OpenClaw is too hard to customize. Fix: Container-first, TypeScript, fork freely.

What's striking is that all four theories are correct for different users, and the data is consistent with that. Each project has found its audience (187–215 contributors for nanobot, picoclaw, and zeroclaw; fork-dominant behavior for nanoclaw) without obviously cannibalizing the others.

The Insight Nobody Else Is Measuring: Issue Ratio vs. Fork Ratio

Here's a diagnostic we think is underused: the issue-to-star ratio and fork-to-star ratio together tell you whether a project is being used, built upon, or talked about.

| Pattern | Issue Ratio | Fork Ratio | What It Means | Example |
|---|---|---|---|---|
| 🔴 High issues, low forks | 0.047 | 0.196 | People run it but complain | OpenClaw |
| 🟢 Low issues, low forks | 0.012 | 0.138 | People deploy and forget | ZeroClaw |
| 🟣 Low issues, high forks | 0.021 | 0.344 | People use it as scaffolding | NanoClaw |
| 🟡 Medium both | 0.022 | 0.171 | People actually engage | Nanobot |

None of these is "better"; they're different products serving different users. But if you're evaluating which project will be around in 2 years, the engagement pattern is likely a better predictor of maintainability than raw stars.
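One way to make the diagnostic concrete is a two-axis classifier. The sketch below is a simplification under stated assumptions: the cutoffs (0.03 for issues, 0.25 for forks) are hypothetical values picked for illustration, not thresholds from our analysis, and the `Pattern` names are ours.

```rust
#[derive(Debug, PartialEq)]
enum Pattern {
    RunAndComplain,  // high issues, low forks
    DeployAndForget, // low issues, low forks
    Scaffolding,     // low issues, high forks
    HeavyOnBoth,     // high issues, high forks (no example in the table)
}

// Hypothetical cutoffs, chosen for illustration only.
const HIGH_ISSUE_RATIO: f64 = 0.03;
const HIGH_FORK_RATIO: f64 = 0.25;

/// Maps an (issue ratio, fork ratio) pair onto one of four quadrants.
fn classify(issue_ratio: f64, fork_ratio: f64) -> Pattern {
    match (
        issue_ratio >= HIGH_ISSUE_RATIO,
        fork_ratio >= HIGH_FORK_RATIO,
    ) {
        (true, false) => Pattern::RunAndComplain,
        (false, false) => Pattern::DeployAndForget,
        (false, true) => Pattern::Scaffolding,
        (true, true) => Pattern::HeavyOnBoth,
    }
}

fn main() {
    // (project, issue ratio, fork ratio) from the table above.
    let rows = [
        ("OpenClaw", 0.047, 0.196),
        ("ZeroClaw", 0.012, 0.138),
        ("NanoClaw", 0.021, 0.344),
        ("Nanobot", 0.022, 0.171),
    ];
    for (name, issues, forks) in rows.iter() {
        println!("{:<9} {:?}", name, classify(*issues, *forks));
    }
}
```

With only two bands per axis, Nanobot lands in the same low/low quadrant as ZeroClaw. Reproducing the table's "medium both" row would need a third band, which this sketch leaves out.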

What This Means If You're Choosing

If you want the full-featured assistant and have the hardware: OpenClaw. 336K stars, 15K issues, and a massive skill ecosystem aren't going anywhere.

If you want to read and understand every line of your AI's brain: Nanobot. 5,551 lines of auditable Python.

If you're deploying to embedded/IoT hardware: ZeroClaw. None of the other projects here claims to run a knowledge graph in 5 MB of RAM.

If you need a single cross-platform binary with no runtime: PicoClaw. Go's cross-compilation is unbeatable here.

If you want a quick base to fork and customize: NanoClaw. The 8,778 forks tell you the template works.

The Bigger Picture

None of these will replace OpenClaw, and they're not trying to. OpenClaw's 336,000 stars represent a specific user: a developer on a MacBook who wants a personal AI assistant they can extend. These four projects are carving out adjacent markets that OpenClaw chose not to serve.

But here's what matters: the competitive pressure is already making OpenClaw better. ZeroClaw's SQLite-only approach preceded a similar discussion in OpenClaw's issue tracker. Nanobot's supply chain incident prompted security reviews across the ecosystem.

The real bet worth watching is NanoClaw's fork-dominant model. If those 8,778 forks produce 10–20 specialized assistants that each find audiences, we'll see a Cambrian explosion of narrow-purpose AI agents built on a shared TypeScript skeleton. That's not fragmentation — that's an ecosystem forming.

And that's what 116,000 stars in 8 weeks actually means: not that OpenClaw is losing, but that personal AI assistants just became a category.

Data collected via GitHub API, March 26, 2026. Contributor counts are estimates from paginated API responses. Fork ratios calculated from public repo metadata. Code analysis from git clone --depth 1 of each repository.
