The Rust Shift: How GitHub's AI Agent Infrastructure Changed Languages

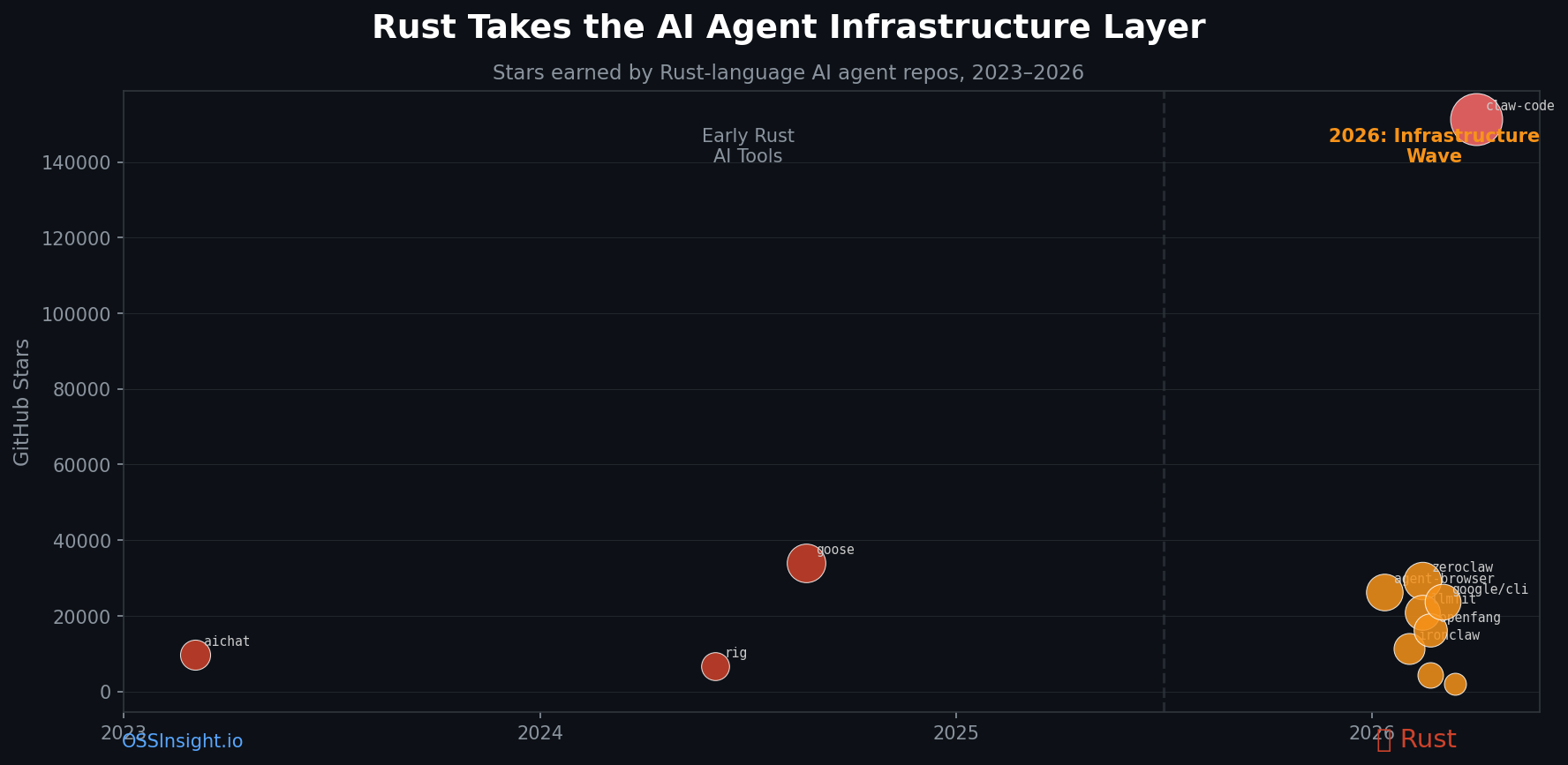

In Q1 2026, a pattern emerged across GitHub's fastest-growing AI agent repos: they're written in Rust. Not Python. Not TypeScript. Rust. Here's what the data shows — and why it matters.

A pattern emerged in early 2026.

While most AI discussion focused on models and prompts, the infrastructure teams — the engineers building agent runtimes, CLI tools, sandboxes, and security layers — converged on a language choice: Rust.

Not Python. Not TypeScript. Rust.

The pattern is visible in the GitHub star data if you know where to look.

The Data: Two Eras of Rust AI Tools

Era 1 — The Early Adopters (2023–2024)

Three Rust AI repos established the foundation before 2026:

| Repo | Stars | Created | Stars/Day | Category |
|---|---|---|---|---|
| sigoden/aichat | 9,740 | Mar 2023 | 8.6 | LLM CLI |
| 0xPlaygrounds/rig | 6,758 | Jun 2024 | 10.1 | Agent framework |
| block/goose | 33,979 | Aug 2024 | 57.8 | Agent runtime |

These were impressive but niche — Rust enthusiasts building LLM tooling. goose at 57.8 stars/day was exceptional.

Era 2 — The 2026 Infrastructure Wave

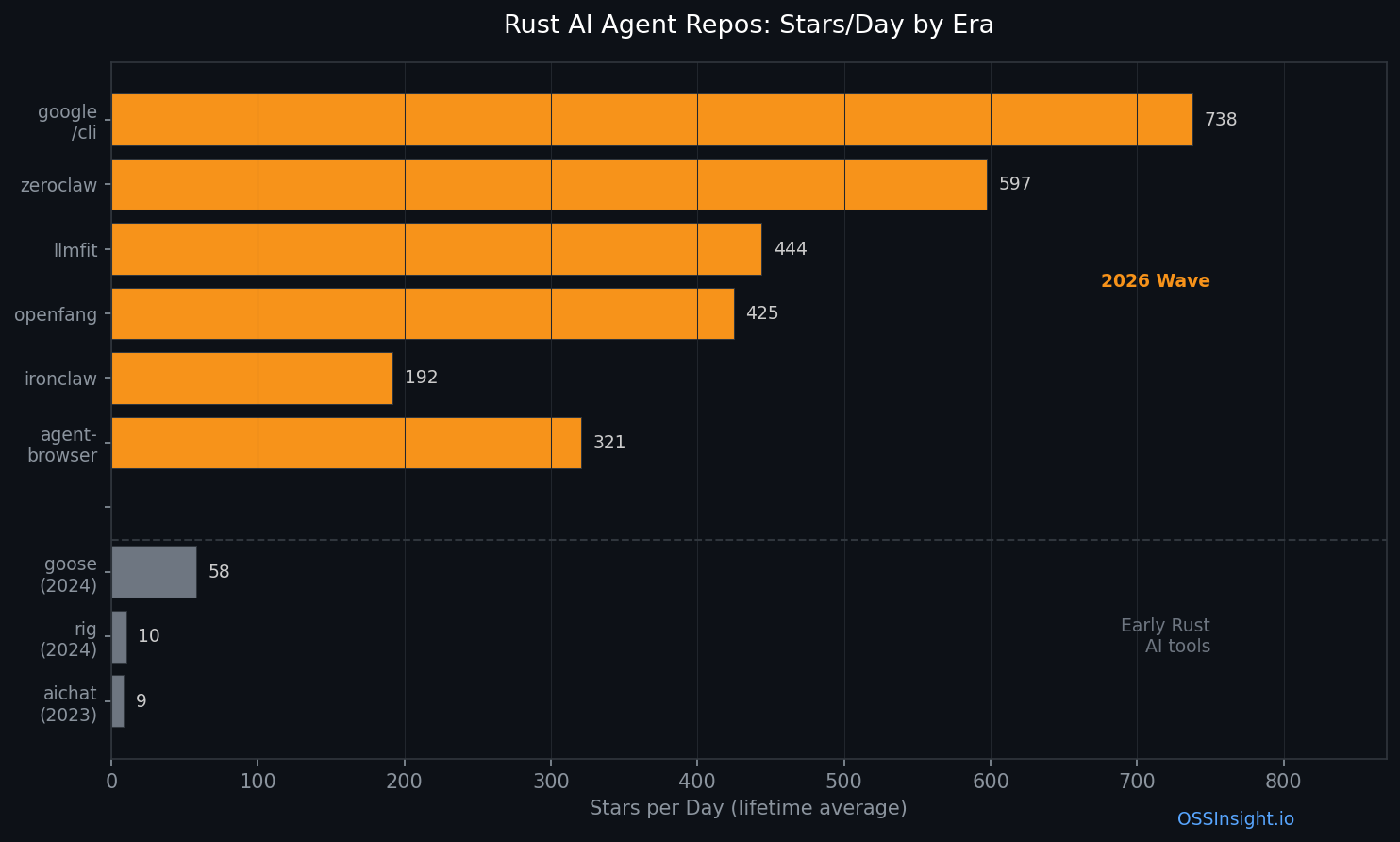

Then Q1 2026 happened. Seven significant Rust AI agent repos launched in under 60 days:

| Repo | Stars | Created (2026) | Days Old | Stars/Day | Fork Ratio |
|---|---|---|---|---|---|
| vercel-labs/agent-browser | 26,316 | Jan 11 | 82 | 320.9 | 6.0% |
| nearai/ironclaw | 11,325 | Feb 3 | 59 | 191.9 | 11.4% |
| zeroclaw-labs/zeroclaw | 29,272 | Feb 13 | 49 | 597.4 | 14.2% |
| AlexsJones/llmfit | 20,856 | Feb 15 | 47 | 443.7 | 5.9% |
| RightNow-AI/openfang | 16,145 | Feb 24 | 38 | 424.9 | 12.5% |
| NVIDIA/OpenShell 🏢 | 4,341 | Feb 24 | 38 | 114.2 | 10.4% |
| googleworkspace/cli 🏢 | 23,611 | Mar 2 | 32 | 737.8 | 4.9% |

Data: GitHub REST API, April 3, 2026. 🏢 = corporate-backed repo (star velocity may reflect brand amplification, not just organic community growth).

Every single one: Rust.

The velocity jump is stark: where the 2023–2024 Rust AI tools averaged about 25 stars/day, the 2026 wave averages 404 stars/day, a 16× increase. Even excluding the two corporate-backed repos (NVIDIA, Google), the remaining five projects average about 396 stars/day, so the trend holds regardless.
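For readers who want to recheck the arithmetic, here is a minimal sketch that recomputes the averages from the two tables. The star counts and creation dates are copied from the Era 2 table (the year 2026 is inferred from context), the Era 1 rates are taken as published because that table gives only creation months, and the function and variable names are illustrative.

```python
from datetime import date

SNAPSHOT = date(2026, 4, 3)  # data collection date stated in the article

# (repo, stars, created) copied from the Era 2 table; year 2026 inferred from context
ERA2 = [
    ("vercel-labs/agent-browser", 26_316, date(2026, 1, 11)),
    ("nearai/ironclaw", 11_325, date(2026, 2, 3)),
    ("zeroclaw-labs/zeroclaw", 29_272, date(2026, 2, 13)),
    ("AlexsJones/llmfit", 20_856, date(2026, 2, 15)),
    ("RightNow-AI/openfang", 16_145, date(2026, 2, 24)),
    ("NVIDIA/OpenShell", 4_341, date(2026, 2, 24)),
    ("googleworkspace/cli", 23_611, date(2026, 3, 2)),
]
CORPORATE = {"NVIDIA/OpenShell", "googleworkspace/cli"}  # the two repos marked with the building emoji

# Era 1 stars/day as published in the first table
ERA1_RATES = [8.6, 10.1, 57.8]


def stars_per_day(stars, created, snapshot=SNAPSHOT):
    """Average stars gained per day between creation and the snapshot date."""
    return stars / (snapshot - created).days


def mean(values):
    values = list(values)
    return sum(values) / len(values)


era2_rates = {name: stars_per_day(stars, created) for name, stars, created in ERA2}
era1_avg = mean(ERA1_RATES)
era2_avg = mean(era2_rates.values())
community_avg = mean(rate for name, rate in era2_rates.items() if name not in CORPORATE)

print(f"Era 1 average:          {era1_avg:.1f} stars/day")
print(f"Era 2 average:          {era2_avg:.1f} stars/day")
print(f"Era 2 minus corporate:  {community_avg:.1f} stars/day")
print(f"Era 2 / Era 1:          {era2_avg / era1_avg:.1f}x")
```

Note that these are unweighted means of per-repo rates, so a single young, fast-growing repo such as googleworkspace/cli pulls the Era 2 figure up considerably.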

Why Rust? The Three Reasons Engineers Keep Citing

1. Agents Touch Real Things Now

The early Python agent frameworks (LangChain, AutoGPT, CrewAI) operated within Python. They called APIs, returned strings, orchestrated other Python code. The blast radius of a bug was contained.

2026 agents are different. They run shell commands. They manage files. They spawn processes. They control browsers. They execute with --permission-mode bypassPermissions.

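When an agent has root access and runs autonomously, memory safety isn't optional. Rust gives you compile-time guarantees against memory corruption and data races, predictable memory layout, and no interpreter or garbage collector at runtime. Python's interpreter is memory-safe too, but it checks nothing before the code runs, and its GIL is a concurrency lock, not a safety mechanism. Process isolation itself still comes from the operating system or the sandbox, not from the language.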

NVIDIA/OpenShell describes itself as "the safe, private runtime for autonomous AI agents." The fact it's Rust isn't incidental — it's the thesis.

Beyond the main seven, smaller repos reinforce the point. sheeki03/tirith (2,108 stars, Rust) exists entirely because Python agent toolchains are vulnerable to "homograph URLs, pipe-to-shell, ANSI injection, obfuscated payloads, data exfiltration." No compiler catches these: they are properties of the input an agent handles, not of the program's own memory, so they have to be checked at runtime. What is telling is that the layer doing that checking is written in Rust.
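To make three of the attack classes in that quote concrete, here is a minimal sketch of the kind of check a guard might run on a command string before an agent executes it. It is not tirith's implementation or rule set: the `flag_command` function, the regular expressions, and the finding labels are all hypothetical, and a real tool would need far more thorough parsing.

```python
import re
from urllib.parse import urlsplit

# Hypothetical patterns for illustration only; real guards need much more coverage.
PIPE_TO_SHELL = re.compile(r"\b(?:curl|wget)\b[^|\n]*\|\s*(?:sudo\s+)?(?:ba|z|da)?sh\b")
URL = re.compile(r"https?://[^\s'\"|;]+")
ANSI_ESCAPE = "\x1b"  # ESC byte that starts terminal control sequences


def flag_command(command: str) -> list:
    """Return a list of labels for suspicious features found in a shell command."""
    findings = []
    # A download piped straight into a shell interpreter
    if PIPE_TO_SHELL.search(command):
        findings.append("pipe-to-shell")
    # Raw escape bytes can rewrite what a terminal displays
    if ANSI_ESCAPE in command:
        findings.append("ansi-escape")
    # Hostnames with non-ASCII or punycode labels may be look-alike (homograph) domains
    for url in URL.findall(command):
        host = urlsplit(url).hostname or ""
        if not host.isascii() or host.startswith("xn--") or ".xn--" in host:
            findings.append("non-ascii-host")
            break
    return findings


if __name__ == "__main__":
    for cmd in [
        "ls -la",
        "curl -fsSL https://example.com/install.sh | sh",
        "curl https://ex\u0430mple.com/tool",  # Cyrillic 'a' in the hostname
    ]:
        print(repr(cmd), "->", flag_command(cmd))
```

The checks operate on text the agent is about to run, which is why they live in a separate layer regardless of what language the agent itself is written in.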

2. Cross-Platform Deploy-Anywhere Is a Hard Requirement

Look at these descriptions side by side:

"Fast, small, and fully autonomous AI personal assistant infrastructure, ANY OS, ANY PLATFORM — deploy anywhere, swap anything" — zeroclaw-labs/zeroclaw

"IronClaw is OpenClaw inspired implementation in Rust focused on privacy and security" — nearai/ironclaw

"Sub-millisecond VM sandboxes for AI agents via copy-on-write forking" — zerobootdev/zeroboot (1,874 stars)

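These aren't web apps. They're ambient infrastructure meant to run on MacBooks, Raspberry Pis, headless servers, and embedded chips. Rust can compile to a single self-contained binary that needs no interpreter or package manager on the target machine (and is fully static when built against musl on Linux). Python requires an interpreter on the target, and usually a virtual environment, pip, and careful dependency management as well.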

3. Performance Is Load-Bearing at Agent Scale

When one human runs one Claude Code session, Python overhead is invisible. When you're running 50 parallel agent workers doing file I/O, subprocess spawning, and MCP tool calls in a hot loop — latency compounds.

AlexsJones/llmfit (20,856 stars) runs hardware benchmarks across hundreds of models to find what fits on your GPU. That's compute-intensive profiling. zerobootdev/zeroboot (mentioned above) advertises "Sub-millisecond VM sandboxes for AI agents via copy-on-write forking." Claims like these depend on a language with low, predictable runtime overhead; an interpreted runtime would struggle to back them up.

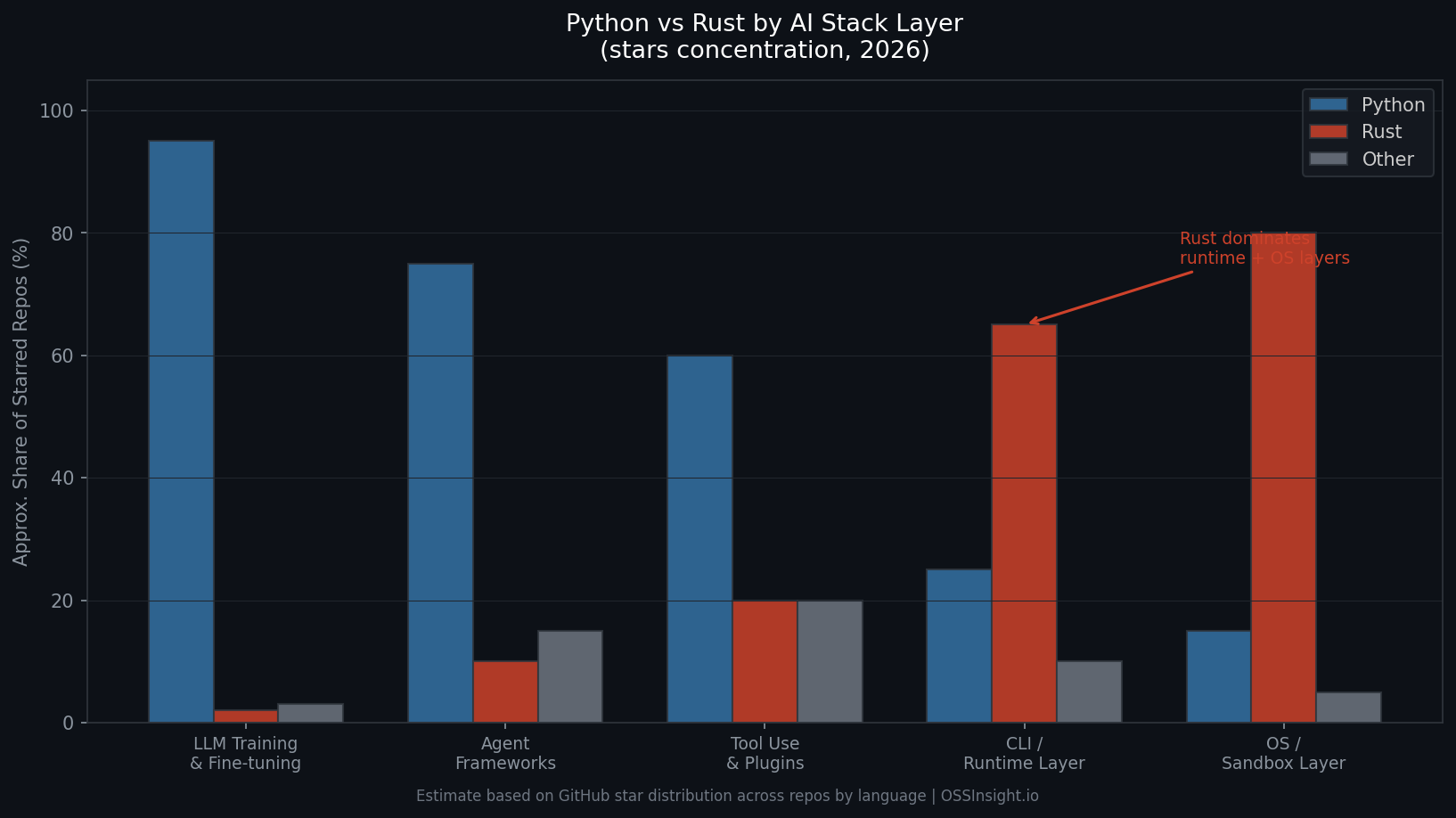

The Stack Is Splitting by Layer

The interesting finding isn't that Rust is replacing Python for AI. Python still dominates model training, fine-tuning, and most agent frameworks.

The finding is that the stack is splitting by layer:

| Layer | Dominant Language | Why |
|---|---|---|
| LLM Training / Fine-tuning | Python | PyTorch ecosystem lock-in |
| Agent Frameworks | Python (with TypeScript growing) | Rapid experimentation, library access |
| Tool Use / Plugins | Mixed | Depends on tool |
| CLI / Runtime Layer | Rust | Speed, single binary, cross-platform |
| OS / Sandbox Layer | Rust | Memory safety, process isolation |

The bottom two layers — where agents actually execute things — are flipping to Rust. The top layers stay Python.

This is the same split that happened in web infrastructure: Python/Ruby for application code, systems languages for the proxies, caches, and databases underneath (Nginx and Redis are both written in C). AI infrastructure is following the same maturation curve.

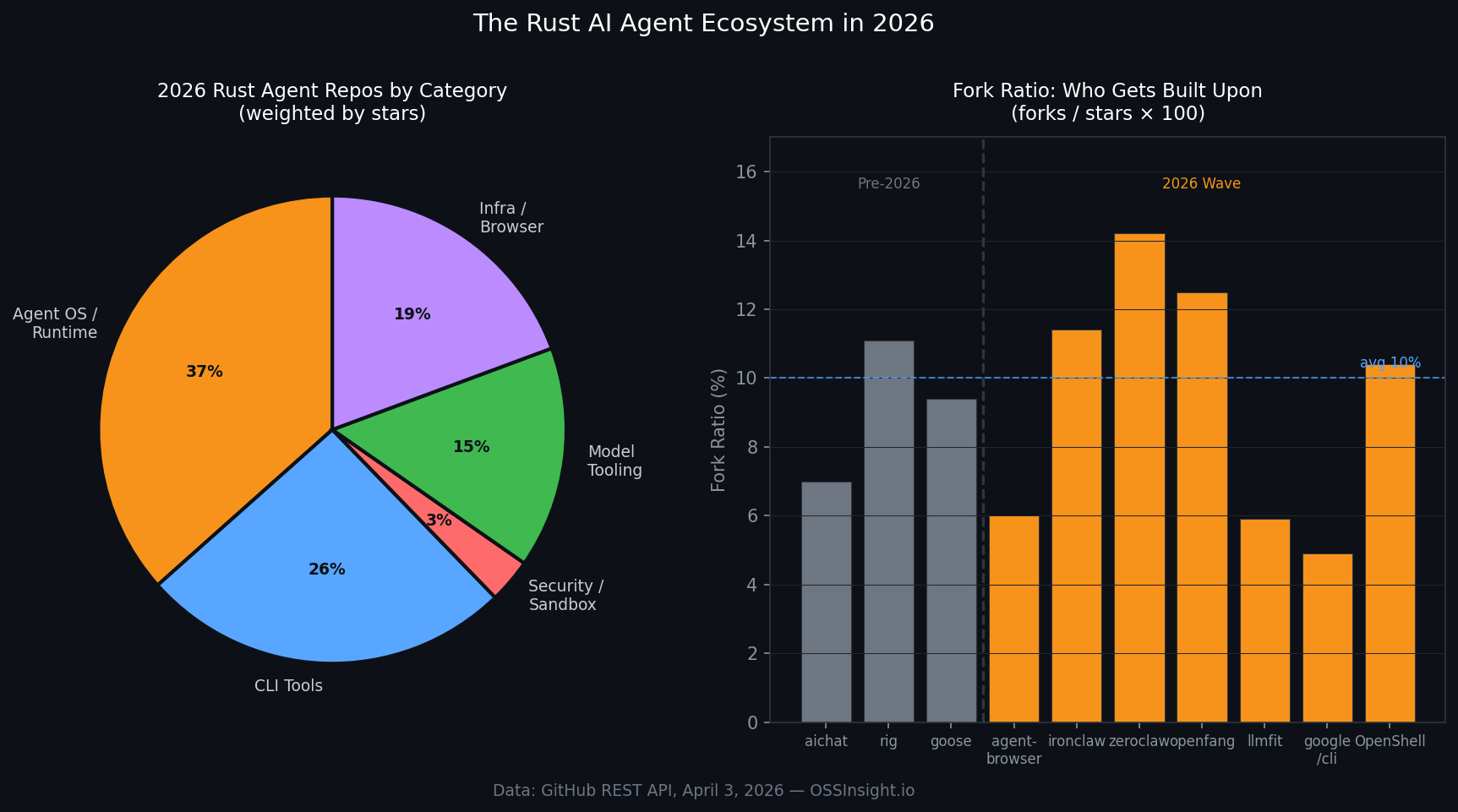

The Ecosystem at a Glance

The seven repos from the main table cluster into four categories (by share of combined stars):

- Agent OS / Runtime (~38%): zeroclaw, openfang, OpenShell — ambient agent infrastructure for any device

- CLI Tools (~26%): googleworkspace/cli, ironclaw — making everything agent-accessible

- Browser Automation (~20%): agent-browser — headless browser control for autonomous agents

- Model Tooling (~16%): llmfit — hardware matching for model deployment
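The category shares above can be reproduced from the star counts in the Era 2 table. The sketch below assumes the repo-to-category grouping exactly as listed; the dictionary and function names are illustrative.

```python
# Star counts copied from the Era 2 table
STARS = {
    "agent-browser": 26_316,
    "ironclaw": 11_325,
    "zeroclaw": 29_272,
    "llmfit": 20_856,
    "openfang": 16_145,
    "OpenShell": 4_341,
    "googleworkspace/cli": 23_611,
}

# Grouping as given in the list above
CATEGORIES = {
    "Agent OS / Runtime": ["zeroclaw", "openfang", "OpenShell"],
    "CLI Tools": ["googleworkspace/cli", "ironclaw"],
    "Browser Automation": ["agent-browser"],
    "Model Tooling": ["llmfit"],
}


def category_shares(stars, categories):
    """Percentage of combined stars held by each category."""
    total = sum(stars.values())
    return {
        category: 100 * sum(stars[repo] for repo in repos) / total
        for category, repos in categories.items()
    }


shares = category_shares(STARS, CATEGORIES)
for category, share in shares.items():
    print(f"{category}: {share:.1f}%")
```

Because the shares are weighted by stars rather than by repo count, the three-repo runtime category leads mainly on the strength of zeroclaw.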

Fork ratios reveal which repos are becoming platforms. High fork ratios signal repos people build on, not just star:

- zeroclaw: 14.2% fork ratio — people are customizing their own agent infrastructure

- openfang: 12.5% — same pattern

- ironclaw: 11.4% — derivative security-focused variants

Compare to googleworkspace/cli at 4.9%: that's a finished tool you use, not a base you fork. The high-fork repos are becoming what Python's LangChain was in 2023: a foundation layer others build on top of.
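As a rough sketch of that comparison, the snippet below computes a fork ratio from the `forks_count` and `stargazers_count` fields of a GitHub REST API repository object, then splits the seven repos using the ratios from the table. The 10% cutoff is an assumption chosen for illustration; the article does not define a threshold.

```python
def fork_ratio(repo: dict) -> float:
    """Fork ratio in percent from a GitHub REST API repository object."""
    return 100 * repo["forks_count"] / repo["stargazers_count"]


# Fork ratios (percent) copied from the Era 2 table
FORK_RATIOS = {
    "agent-browser": 6.0,
    "ironclaw": 11.4,
    "zeroclaw": 14.2,
    "llmfit": 5.9,
    "openfang": 12.5,
    "OpenShell": 10.4,
    "googleworkspace/cli": 4.9,
}

PLATFORM_THRESHOLD = 10.0  # assumed cutoff, not a figure from the article


def split_by_fork_ratio(ratios, threshold=PLATFORM_THRESHOLD):
    """Return (high, low) repo name lists, each sorted by descending fork ratio."""
    ranked = sorted(ratios, key=ratios.get, reverse=True)
    high = [name for name in ranked if ratios[name] >= threshold]
    low = [name for name in ranked if ratios[name] < threshold]
    return high, low


high, low = split_by_fork_ratio(FORK_RATIOS)
print("Forked heavily:", high)
print("Mostly starred:", low)
```

With this cutoff, OpenShell (10.4%) lands in the heavily forked group alongside the three repos named above.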

What This Means

For developers: If you're building infrastructure that agents run on — not agent logic, but agent execution environments — the community has chosen Rust. Launching in Python for a runtime-layer tool is now a red flag, not a neutral choice.

For enterprises: The same pattern that drove companies to Kubernetes (written in Go) and then to Rust-based cloud native tools is repeating. Rust's memory safety story is a genuine competitive advantage when agents run with elevated permissions.

For the ecosystem: We're watching the AI stack mature in real time, following the same language stratification that databases and web infrastructure went through over the previous decade. Python is the scripting layer; Rust is the runtime layer. The line is being drawn in GitHub star data, right now.

The 2026 wave didn't happen by accident. It happened because agents started doing things, and doing things at scale requires infrastructure that can be trusted.

Rust is what you reach for when you want trust built in.

All star counts verified via GitHub REST API on April 3, 2026. Stars/day calculated from repo creation date to April 3, 2026. Fork ratios are forks_count / stargazers_count.

Explore these repos live: